
Is it time to rethink 3D vision?

16 October 2023

Venturing into the third dimension has been possible for more than 30 years, yet it still sends a frisson of trepidation through the hearts of many a production team. Nathaniel Hofmann argues it’s time to let go of our apprehensions once and for all.

IN THE space of a few years, the number of ways to build positioning, inspection, measuring and code-reading applications with machine vision has multiplied. The commercial gains are there for the taking: robust quality inspection increases yields and reduces waste; accelerated throughput boosts productivity. A process that automatically recognises product changes in real time improves manufacturing flexibility and saves infrastructure costs. Automating with robots increases throughput and reliability. Add digitised data and you can track trends in process quality, manufacturing performance and asset health.

Yet, despite the opportunities, the road to 3D is littered with switched-off systems that were quick to set up but failed to meet the longer-term need. Equally, there are still many factories where manual quality inspection persists, often staffed by superstar product checkers of a generation heading for retirement.

Specification is key

Could it be time to revisit 3D? If you decide to take the plunge, it’s right to be cautious given the level of choice and sophistication. A cast-iron specification has always been crucial. Well-planned solutions will be adaptable to changes in the future.

First, get your priorities in the right order. Start with the appropriate technology, then consider the complexity, and you will be able to justify the return on investment. However, if you start with the price and then favour simplicity over functionality, you may end up with a limited or redundant system that needs to be replaced much sooner than you hoped.

3D imaging technologies

Capturing the third dimension is achieved using either scanning or snapshot machine vision technologies. The oldest technique, laser triangulation, uses a camera positioned at an angle to capture images of a laser line. As the object moves along a conveyor, the camera records a succession of ‘slices’ that together build a profile of its 3D shape. Time-of-Flight 3D cameras create a 3D point cloud in a single snapshot, without the need to move the camera or the object, by measuring how long light takes to travel to the target and back and calculating the distance from that time. The most recent advance in stereo technology enables a camera to work like human eyes, combining two 2D images to create depth. All these technologies have pros and cons depending on the automation system, the distance from the object and the lighting.
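To make the snapshot principles a little more concrete, here is a minimal sketch of the textbook relationships behind time-of-flight and stereo depth. It assumes an idealised pulsed time-of-flight measurement and a rectified stereo pair; the function names and parameters are illustrative only and are not taken from any camera's software.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance to the target from the round-trip time of a light pulse.

    The light travels to the target and back, so the one-way distance
    is half of the total path length.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def stereo_depth_m(focal_length_px: float, baseline_m: float,
                   disparity_px: float) -> float:
    """Depth of a point seen by two parallel, rectified cameras.

    disparity_px is the horizontal shift of the same point between the
    left and right images; a larger shift means a closer object.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Example values are invented for illustration.
    print(tof_distance_m(10e-9))           # 10 ns round trip
    print(stereo_depth_m(800.0, 0.1, 40.0))
```

The time-of-flight formula shows why timing precision matters: a round trip of 10 nanoseconds corresponds to only about 1.5 m, so small timing errors translate directly into distance errors.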

2D or 3D? – that is the question

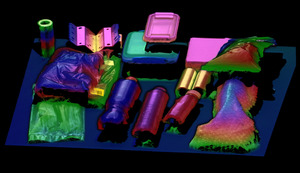

Do you need to use 3D at all? Well, that depends, because the images reveal different things about an object. Take the 2D images in Fig 1. If you just want to confirm that a lid has been added, for example, then you won’t need 3D. Try to check the print with a 3D image and it will be invisible. If you want to see how flat the lid is, or need to know the height of the box so a robot can pick it without fear of damage, or you want to confirm that the contents are complete, then 3D is the right technology.

Fig 1: 2D v 3D imaging

If you need height information, i.e. data in the Z axis as well as X and Y, then the application is more likely to need 3D. Even so, it is possible that what is called 2½D may suffice. In 2½D, a grey-level representation of the height image, or depth map, may be sufficient when you don’t need to know the entire shape of the object. Even with fully 3D-capable cameras, many applications still only use 2½D, because there is only a fraction of the image data to process compared to a full 3D point cloud.
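As a rough illustration of the 2½D idea, the sketch below converts a small grid of height values into an 8-bit grey-level image, where brighter pixels mean taller points. The function name, the millimetre units and the choice of an 8-bit range are assumptions made for the example, not a description of any particular camera's output format.

```python
def height_map_to_grey(height_mm, z_min_mm, z_max_mm):
    """Map a 2D grid of heights to 8-bit grey levels (0 to 255).

    Heights outside [z_min_mm, z_max_mm] are clamped to the range, so
    the measuring window should be chosen to cover the object.
    """
    if z_max_mm <= z_min_mm:
        raise ValueError("z_max_mm must be greater than z_min_mm")
    span = z_max_mm - z_min_mm
    grey = []
    for row in height_mm:
        grey_row = []
        for z in row:
            clamped = min(max(z, z_min_mm), z_max_mm)
            grey_row.append(round((clamped - z_min_mm) / span * 255))
        grey.append(grey_row)
    return grey


if __name__ == "__main__":
    # Invented heights: a flat surface with one raised corner.
    heights = [[0.0, 20.0],
               [100.0, 150.0]]
    print(height_map_to_grey(heights, 0.0, 100.0))
```

Each pixel carries a single height value rather than a full X, Y, Z coordinate, which is why a depth map is so much lighter to process than a point cloud and can be handled with familiar 2D image tools.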

Parallel tracks

While some users do not have the knowledge, or the time, to attempt a 3D integration using their own raw data, others want to output streamed data and integrate it into their own automation. For this reason, the 3D vision market is developing along two parallel tracks. This is an approach that SICK has pioneered.

Whichever path you choose, being able to configure the right vision toolset and progress rapidly to completely reliable operation is vital. SICK’s machine vision hardware portfolio is therefore supported by just two software streams, all the way from tiny, intelligent sensors through to high-performance 3D streaming cameras and Sensor Integration Machines.

Developments in programmable 3D cameras are enabling more 3D vision tasks to be processed onboard the device. Machine vision inspections, code readers and robot guidance tasks can all be set up in a matter of hours or days using easy-to-use drop-down tools and a common familiar graphic interface.

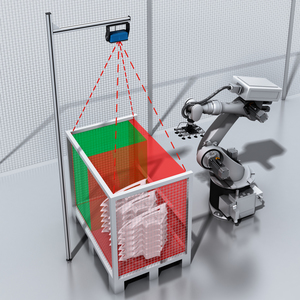

SICK Nova Foundation Software

With SICK’s Nova foundation software, it’s simple to run a ready-made sensorApp directly onboard our smart devices. You can access the tools you need for specific tasks from a drag-and-drop menu. For example, the SICK Visionary-T Mini AP time-of-flight snapshot camera can be used with SICK’s Nova 3D Presence Inspection sensorApp to master tasks like emptiness checks in bins, totes and crates, or presence detection of objects in 3D scenes, as well as simple measurements and quality tolerance checks.

3D quality checks for completeness frequently need several, more advanced, machine vision software tools, but using the standard downloadable Presence Inspection toolset, they become easy to configure. The camera outputs results to the control system, such as confirming all parts are present and at the right locations, or verifying a set of measurements.
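The logic behind a simple emptiness check can be sketched in a few lines. This is a hypothetical illustration of the general idea only, assuming a camera looking straight down into a bin and a depth map in millimetres; it is not the Presence Inspection sensorApp or any SICK interface, and the function name, tolerance and threshold values are invented for the example.

```python
def is_bin_empty(depth_mm, empty_floor_depth_mm,
                 tolerance_mm=5.0, max_occupied_fraction=0.01):
    """Decide whether a bin is empty from a top-down depth map.

    A pixel counts as occupied when it is closer to the camera than
    the empty bin floor by more than tolerance_mm. The bin is reported
    empty when the occupied share of pixels stays at or below
    max_occupied_fraction, which absorbs a little sensor noise.
    """
    total = 0
    occupied = 0
    for row in depth_mm:
        for depth in row:
            total += 1
            if empty_floor_depth_mm - depth > tolerance_mm:
                occupied += 1
    if total == 0:
        raise ValueError("depth map is empty")
    return occupied / total <= max_occupied_fraction


if __name__ == "__main__":
    # Invented scene: bin floor 500 mm from the camera, 10 x 10 pixels.
    scene = [[500.0] * 10 for _ in range(10)]
    print(is_bin_empty(scene, 500.0))
    # Place a 3 x 3 pixel object that is 50 mm tall in the bin.
    for r in range(3):
        for c in range(3):
            scene[r][c] = 450.0
    print(is_bin_empty(scene, 500.0))
```

In practice the tolerance and occupied-fraction threshold would be tuned to the camera's noise level and the smallest object that must be detected.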

If your application demands more, a 3D streaming camera will deliver complete flexibility. True, you will need to commit to the costs and development time, but, with so much potential, try not to be daunted by the complexity. The good news is that the latest cameras are now providing a fast track for integrators to harness the unmatched speed and measurement precision of 3D imaging technology.

The SICK Ruler3000, for example, combines SICK’s groundbreaking Ranger3 streaming camera with a Class 2 eye-safe laser, pre-selected optics and factory-calibrated geometries to enable much simpler configuration and commissioning. With industry-standard compliance giving comprehensive access to machine vision software tools, the Ruler3000 dramatically cuts time and complexity when integrating more demanding inspection, measurement and robot guidance tasks across a wide range of industries.

Quality inspection

Using a streaming camera offers almost unlimited image processing options; the more tools you use, the greater the possibilities. You can apply several machine vision tools together and combine the results from multiple cameras. With the ability to calibrate or localise images together, you can fuse point clouds to form one data set.
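Fusing point clouds from several cameras comes down to moving every cloud into one shared coordinate frame and then combining the points. The sketch below assumes each camera's pose (a 3x3 rotation matrix and a translation vector) is already known from calibration; the function names and data layout are illustrative assumptions rather than any vendor's API.

```python
def transform_points(points, rotation, translation):
    """Apply a rigid transform (rotate, then translate) to 3D points."""
    transformed = []
    for x, y, z in points:
        transformed.append(tuple(
            rotation[i][0] * x + rotation[i][1] * y + rotation[i][2] * z
            + translation[i]
            for i in range(3)
        ))
    return transformed


def fuse_point_clouds(clouds_with_poses):
    """Merge clouds into one data set in a shared coordinate frame.

    clouds_with_poses is a list of (points, rotation, translation)
    tuples, one per camera.
    """
    fused = []
    for points, rotation, translation in clouds_with_poses:
        fused.extend(transform_points(points, rotation, translation))
    return fused


if __name__ == "__main__":
    identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
    # Second camera: rotated 90 degrees about Z and shifted 10 units in X.
    quarter_turn_z = [[0, -1, 0], [1, 0, 0], [0, 0, 1]]
    camera_a = ([(0.0, 0.0, 0.0)], identity, (0.0, 0.0, 0.0))
    camera_b = ([(1.0, 0.0, 0.0)], quarter_turn_z, (10.0, 0.0, 0.0))
    print(fuse_point_clouds([camera_a, camera_b]))
```

The quality of the fused data set depends almost entirely on how accurately the camera poses are calibrated, which is why the calibration step deserves attention in the specification.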

There’s never been a more exciting time to embark on a 3D machine vision journey. Before you dive in, take time to develop the right specification. Then, partner with a machine vision specialist like SICK with a broad scope of cross-industry experience, who can advise across the hardware and software spectrum. Don’t be disheartened. The options could turn out to be neither as limited nor as complex as you feared.

Nathaniel Hofmann is market product manager for machine vision at SICK

Key Points

- With 3D vision it is important to start with the appropriate technology, then consider the complexity to justify RoI

- Different technologies have pros and cons depending on the automation system, distance from the object and lighting

- Developments in programmable 3D cameras are enabling more 3D vision tasks to be processed onboard the device
